What Is AI Assurance and Why Does It Matter?

Mike Reeves, PhD

Artificial intelligence (AI) models are not static pieces of software. Their behavior can change after deployment, either because they are retrained on new data or because the data they encounter in production drifts away from the data they were trained on. This dynamic nature makes traditional, one-time testing insufficient for managing long-term risk. A model that is fair and accurate today may develop biases or performance issues tomorrow. This is why oversight must be continuous. AI assurance provides this ongoing lifecycle management: it moves governance from a reactive, point-in-time audit to a proactive process of continuous monitoring, validation, and improvement, helping your systems remain reliable and compliant.

Key Takeaways

Treat AI assurance as a continuous cycle: Artificial intelligence systems change over time, so assurance cannot be a single event. Implement ongoing monitoring to manage risks like model drift and maintain compliance with evolving standards.

Build trust with concrete evidence: Stakeholders need proof that your AI is fair and reliable. Use practices like bias detection, detailed audit trails, and explainable models to provide clear, verifiable documentation.

Start with a clear governance structure: Effective AI assurance requires defined roles and responsibilities. Create a cross-functional team from compliance, IT, and business units to ensure comprehensive oversight from the beginning.

What Is AI Assurance?

AI assurance provides a structured way to verify that artificial intelligence systems are trustworthy and operate as expected. It covers the methods and evidence needed to build confidence in AI.

Define AI Assurance and Its Scope

AI assurance is a set of practices used to check that artificial intelligence (AI) systems are dependable and safe. It helps organizations confirm their AI models operate ethically and reliably throughout their entire lifecycle.

The goal is to build trust among everyone involved, from developers to customers. The UK government offers a helpful introduction to AI assurance, describing it as a way to measure and verify that AI systems work as intended.

This process involves evaluating performance and managing risks. It also ensures the system complies with relevant rules and standards. It provides documented proof that an AI system is fair, transparent, and accountable.

How AI Assurance Differs from Traditional Software Testing

AI assurance is different from traditional software testing. Software testing often happens at a single point in time, usually before a product is released. AI assurance, however, is a continuous process.

According to MITRE Corporation, this involves ongoing model monitoring and regular audits. AI models are not static: they may be retrained on new data, and the data they see in production can shift over time, so their behavior can change after release.

This dynamic nature means that a one-time check is not enough to manage risk. As The Center for Audit Quality notes, auditors can provide assurance services to independently check how companies govern their AI. This helps build public confidence.

Why Your Organization Needs AI Assurance

Adopting artificial intelligence (AI) offers significant advantages, but it also introduces new responsibilities. AI assurance provides a structured way to manage these duties. It helps your organization handle risks, comply with rules, and build confidence among stakeholders.

Mitigate Risks in AI Systems

AI systems can create risks that traditional software does not. These include issues with safety, reliability, privacy, and security. AI assurance helps organizations manage these potential problems.

A formal assurance process provides a framework to address fairness and AI bias and to check that models operate as intended. Without it, your organization could face flawed decision-making or data breaches, which can damage your reputation and lead to financial loss. A structured approach helps keep your AI systems transparent and trustworthy.

Meet Regulatory Requirements

Governments are creating new rules for artificial intelligence. AI assurance helps your organization show it is following these regulations. This includes existing data protection rules and new, AI-specific requirements.

Frameworks like the NIST AI Risk Management Framework provide guidance on responsible AI development and use. By implementing an AI assurance program, you can demonstrate due diligence to auditors and regulators. This proactive stance helps you stay ahead of compliance obligations and avoid penalties for non-compliance.

Build Stakeholder Trust

Many people find it hard to trust AI because its decision-making process can be unclear. This is often called the "black box" problem. Building trust is essential for customers, partners, and employees.

AI assurance makes your systems more transparent. According to The Center for Audit Quality, assurance services can help by independently verifying how companies manage their AI. This provides clear evidence that your AI operates ethically, fairly, and securely. This proof is critical for earning and maintaining the confidence of everyone who interacts with your organization.

Core Components of an AI Assurance Framework

An effective artificial intelligence (AI) assurance framework provides a structured way to manage risks and build trust. It is not a single activity but a collection of related practices that cover the entire lifecycle of an AI system. Each component addresses a specific aspect of AI performance, from initial design to ongoing operation.

According to the MITRE Corporation, AI assurance uses "systematic techniques, processes, and tools" to confirm that AI systems are reliable and operate as intended. These core components work together to create a complete picture of an AI system's trustworthiness, helping organizations meet regulatory standards and stakeholder expectations. A strong framework moves assurance from a reactive, audit-focused task to a proactive, continuous process. This approach ensures that governance is embedded throughout the development and deployment of artificial intelligence systems, rather than being an afterthought.

Verification and Validation

Verification and validation are foundational to AI assurance. Verification confirms that the AI model was built correctly and meets its design specifications. Validation ensures the model solves the right problem and meets the needs of the business and its users.

These processes are not a one-time check before deployment. They must be applied throughout the AI system's lifecycle. This includes evaluating the data used for training, testing the model's logic, and confirming its outputs align with expected outcomes. This continuous evaluation helps ensure the system remains safe, secure, and reliable over time.

Safety and Security

AI systems can introduce new vulnerabilities and safety concerns. The safety and security component of an assurance framework focuses on identifying and mitigating these risks. This involves assessing the system for potential weaknesses that could be exploited, such as data poisoning or adversarial attacks.

As consulting firm PwC notes, evaluating AI systems for these risks is critical, especially in high-stakes fields like healthcare or finance. The goal is to ensure the system does not cause unintended harm or produce unreliable results when faced with unexpected inputs. A secure system is one that is resilient to both internal failures and external threats.

Transparency and Explainability

Many AI models operate like a "black box," making it difficult to understand their internal logic. Transparency and explainability are designed to open that box. Transparency provides visibility into how a model is designed, trained, and deployed. Explainability focuses on making an AI model's specific decisions understandable to humans.

Providing this insight is essential for building trust with regulators, auditors, and internal teams. When stakeholders can understand the decision-making process, they can more easily verify that the system operates fairly and aligns with organizational policies. This is a key step in establishing accountability for automated decisions.

Bias Detection and Mitigation

AI models learn from data, and if that data contains historical biases, the model can replicate or even amplify them. Bias detection involves actively testing AI systems for unfair or discriminatory outcomes against certain groups. This is a critical part of ensuring fairness in AI.

Once bias is identified, mitigation involves taking steps to correct it. This could mean adjusting the training data, modifying the algorithm, or implementing post-processing controls on the model's outputs. Monitoring for and reducing discriminatory outputs is a fundamental aspect of responsible AI governance.
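One common way to start bias detection is to compare how often each group receives a favorable outcome. The sketch below, a minimal illustration rather than a complete fairness audit, computes per-group selection rates and the disparate impact ratio (lowest rate divided by highest). The group labels and decisions are hypothetical, and the 0.8 review threshold is an assumption borrowed from the "four-fifths" rule of thumb; the right metric and threshold depend on the use case and applicable law.

```python
from collections import defaultdict

def selection_rates(predictions, groups):
    """Share of favorable outcomes (prediction == 1) within each group."""
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        favorable[group] += pred
    return {g: favorable[g] / totals[g] for g in totals}

def disparate_impact_ratio(predictions, groups):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(predictions, groups)
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group received a favorable outcome")
    return min(rates.values()) / highest

# Hypothetical approval decisions for two applicant groups.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
ratio = disparate_impact_ratio(preds, groups)
# Assumed review threshold: the "four-fifths" rule of thumb (ratio below 0.8).
needs_review = ratio < 0.8
```

Here group A is approved 75% of the time and group B 25% of the time, so the ratio is about 0.33 and the model would be flagged for mitigation.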

Continuous Monitoring

An AI model's performance is not static. It can degrade over time as real-world data patterns change, a problem known as model drift. Continuous monitoring involves tracking the system's performance after it has been deployed to catch these issues early.

This ongoing oversight ensures the model remains effective, reliable, and fair throughout its operational life. By tracking key performance metrics and risk indicators, organizations can maintain the integrity of their AI systems. This practice is vital for preventing performance degradation and ensuring the system continues to deliver value safely and reliably.

What Techniques Does AI Assurance Use?

AI assurance uses a set of practical techniques to verify that artificial intelligence systems work correctly and safely. These methods are not one-time checks. Instead, they are applied throughout the system's entire lifecycle, from design to deployment and beyond.

An effective assurance program combines several key practices. These include rigorous testing, continuous observation, detailed record-keeping, and performance measurement. Together, these techniques provide a structured way to manage risks and build confidence in AI outputs. They help organizations confirm that their systems meet both internal standards and external regulatory requirements.

Model Testing and Validation

Model testing and validation are fundamental to AI assurance. These processes confirm that an AI system behaves as intended and produces accurate, reliable results. The process involves a series of checks to evaluate the model against predefined requirements.

The MITRE Corporation notes that assurance uses systematic techniques to verify that AI systems are safe, secure, and ethical. Testing evaluates a model’s performance, fairness, and robustness. For example, tests can check for biases in decision-making or assess how the system handles unexpected data. This step is critical for identifying potential failures before the system is deployed.
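A simple robustness test checks whether small changes to an input flip the model's decision. The sketch below is illustrative only: `toy_model` is a hypothetical stand-in for a real model, and the noise level and number of trials are assumed values you would tune to the data's scale.

```python
import random

def toy_model(features):
    """Hypothetical stand-in for a real model: approve (1) when the mean feature exceeds 0.5."""
    return 1 if sum(features) / len(features) > 0.5 else 0

def perturbation_stability(model, inputs, noise=0.01, trials=20, seed=0):
    """Fraction of inputs whose prediction stays the same under every noisy trial."""
    rng = random.Random(seed)  # fixed seed so the test is repeatable
    stable = 0
    for x in inputs:
        baseline = model(x)
        unchanged = all(
            model([v + rng.gauss(0, noise) for v in x]) == baseline
            for _ in range(trials)
        )
        stable += unchanged
    return stable / len(inputs)

score = perturbation_stability(toy_model, [[0.9, 0.8], [0.2, 0.1]])
```

Inputs far from the decision boundary should score 1.0; a low score on realistic inputs suggests the model is fragile to minor data errors and warrants investigation before deployment.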

Automated Monitoring

Once an AI model is deployed, its performance must be watched continuously. Automated monitoring uses specialized tools to track the system’s behavior and outputs in its live environment. This is an ongoing process to ensure sustained reliability.

The main goal is to detect and alert teams to any performance degradation. This can happen if the data the model receives changes over time, a concept known as data drift. Continuous model monitoring helps catch these issues early, allowing for timely intervention. It also helps identify potential security vulnerabilities or unintended behaviors that may emerge after deployment.
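Data drift is often quantified by comparing the distribution of a feature in production against its distribution in the training data. The sketch below computes the Population Stability Index (PSI) for one numeric feature, using equal-width bins over the reference range; the bin count and the small floor that avoids `log(0)` are assumptions. A commonly cited industry convention (not a formal standard) reads PSI below 0.1 as stable, 0.1 to 0.25 as moderate shift, and above 0.25 as significant drift.

```python
import math

def population_stability_index(expected, actual, bins=10):
    """PSI between a reference sample (e.g. training data) and a live sample of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference feature

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the reference range into edge bins
            counts[idx] += 1
        # Floor empty bins at a tiny share so the log term stays defined.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

reference = [i / 100 for i in range(100)]       # hypothetical training distribution
live = [0.5 + i / 200 for i in range(100)]      # hypothetical production data, shifted upward
psi = population_stability_index(reference, live)
```

In a monitoring job this would run on a schedule for each important feature, with an alert raised when the score crosses the chosen threshold.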

Audit Trails and Documentation

Clear and complete documentation is a cornerstone of trustworthy AI. Audit trails and documentation create a detailed record of an AI system’s entire lifecycle. This practice is essential for transparency, accountability, and regulatory compliance.

Comprehensive documentation should explain where the system’s data came from, how the model was built, and the logic behind its decisions. An audit trail logs all activities, changes, and outputs, making it possible to trace a specific result back to its origin. This level of detail is crucial for internal governance, external audits, and explaining the system’s behavior to stakeholders.
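One way to make an audit trail tamper-evident is to chain entries with cryptographic hashes, so that editing or reordering an earlier record invalidates every later one. The sketch below is a minimal illustration under stated assumptions: the event fields are hypothetical, and a real system would also store the latest hash somewhere the log's editors cannot change, since someone able to rewrite the whole log could otherwise recompute every hash.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(event, prev_hash):
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_entry(log, event):
    """Append an event whose hash also covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)})

def verify_chain(log):
    """Recompute every hash; an edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True

# Hypothetical lifecycle events for one model.
log = []
append_entry(log, {"action": "train", "model": "credit-risk-v1", "dataset": "loans-2023"})
append_entry(log, {"action": "approve", "model": "credit-risk-v1", "by": "model-risk-committee"})
```

Because each entry records which dataset and approval it depends on, a specific output can be traced back to its origin, and `verify_chain` gives auditors a quick integrity check.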

Performance Benchmarking

Performance benchmarking establishes a clear standard for an AI system's success. It involves defining specific, measurable metrics to evaluate the model's performance against a set baseline. This baseline could be its initial performance level or an industry standard.

By regularly comparing the system's live performance to these benchmarks, organizations can objectively assess its effectiveness. This continuous performance monitoring helps teams understand if the model is still meeting its objectives. If performance drops below the established benchmark, it signals that the model may need to be retrained or adjusted. This data-driven approach ensures the AI system continues to provide value.
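The comparison against a baseline can be as simple as the sketch below. It assumes every metric is "higher is better" and uses a hypothetical tolerance of 0.02; the metric names and values are illustrative, and in practice each metric may need its own tolerance.

```python
def below_benchmark(live, baseline, tolerance=0.02):
    """Metrics more than `tolerance` below baseline, as {name: (baseline, live)}.
    Assumes higher is better for every metric listed."""
    return {
        name: (baseline[name], value)
        for name, value in live.items()
        if name in baseline and value < baseline[name] - tolerance
    }

baseline = {"accuracy": 0.91, "recall": 0.85}  # e.g. performance at validation time
live = {"accuracy": 0.90, "recall": 0.80}      # e.g. the last 30 days in production
regressions = below_benchmark(live, baseline)
needs_retraining_review = bool(regressions)
```

Here accuracy stays within tolerance but recall has dropped by five points, so the model would be flagged for retraining or adjustment.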

How to Build Trust in AI with Assurance

Building trust in artificial intelligence (AI) systems is not automatic. It requires a deliberate and structured approach. An AI assurance framework provides the necessary processes to verify that your AI systems are fair, reliable, and transparent. By implementing these practices, you can give stakeholders the confidence they need to trust your technology.

This involves making systems understandable, engaging with the people they affect, proving your ethical commitments, and continuously monitoring performance.

Ensure Accountability and Transparency

Accountability starts with clarity. For stakeholders to trust an AI system, they need to understand how it operates and why it makes specific decisions. This is where transparency and explainability become essential.

AI assurance provides a structured way to confirm that your systems are dependable and clear. It helps you establish and follow rules for how AI is developed and used. According to the UK government, a key principle is providing appropriate transparency and explainability so people can understand the system’s reasoning. This clarity is fundamental for building trust with regulators, customers, and internal teams who rely on the AI’s output.

Engage with Stakeholders

Trust in AI is also a social challenge. Stakeholders, including customers, employees, and regulators, often have valid concerns about fairness and potential bias in AI systems. Addressing these concerns head-on is a critical part of building confidence.

Engaging with stakeholders helps you understand their expectations and worries. Building trust in AI requires addressing ethical questions about how the technology is applied. An AI assurance framework creates a formal process for evaluating your systems against these ethical principles. It helps your organization translate high-level goals like fairness into concrete, measurable controls that you can report on to stakeholders.

Demonstrate Ethical AI Practices

Making claims about ethical AI is not enough. Organizations must be able to demonstrate that their practices align with their principles. AI assurance provides the evidence needed to prove your commitment to responsible AI development and deployment.

The process covers the entire AI lifecycle, from initial design to ongoing operation. It evaluates both technical performance, like accuracy, and ethical considerations, such as fairness. This comprehensive approach helps ensure that your systems do not create unfair outcomes for individuals or groups. By using an AI assurance framework, you can systematically check for and correct issues, providing tangible proof of your ethical practices.

Commit to Continuous Improvement

AI systems are not static. Their performance can change over time as they process new data, a phenomenon known as model drift. Because of this, trust cannot be earned with a one-time check. It requires an ongoing commitment to monitoring and improvement.

AI assurance is a continuous lifecycle, not a single event. It involves constant performance monitoring to ensure systems continue to operate as intended. This ongoing oversight helps you catch and fix problems before they impact stakeholders. According to MITRE, this approach of continuous lifecycle assurance is vital for maintaining system integrity. This sustained effort shows stakeholders that your organization is dedicated to upholding its standards over the long term.

Common Challenges in AI Assurance Implementation

Implementing an AI assurance program is essential for managing risk and building trust. However, many organizations encounter significant barriers along the way. These challenges often involve technical complexity, a shortage of specialized skills, limited resources, and data security concerns. Understanding these common hurdles is the first step toward creating a plan to overcome them.

Technical Complexity and "Black Box" Models

Many AI systems operate as "black boxes," meaning their decision-making is not easily understood. The Center for Audit Quality notes that this lack of transparency makes it difficult to build trust in AI. If you cannot explain how a model reached a conclusion, you cannot verify its accuracy or fairness.

This opacity is a major challenge for assurance. It becomes hard to detect hidden biases or errors in the model's logic. Without clear insight, validating compliance with internal policies and external regulations is nearly impossible.

Lack of In-House Skills and Expertise

AI assurance requires a unique blend of expertise in data science, cybersecurity, and compliance. Finding individuals with this knowledge is difficult. A UK government report found that a lack of skilled people is a primary challenge for AI governance.

This skills gap can prevent organizations from maintaining a robust assurance framework. Without the right talent, companies struggle to interpret model outputs, conduct audits, and manage AI systems responsibly.

Resource and Budget Constraints

An AI assurance program requires a significant investment in tools, training, and ongoing monitoring. Many organizations find it difficult to secure the necessary budget. Foundational security for AI is often still a work in progress, according to research from Domino Technologies, Inc.

This makes funding advanced assurance an even greater challenge. When leadership does not fully understand the risks, it is hard to justify the costs. This can leave critical systems without proper oversight.

Data Privacy and Security Risks

AI systems are often trained on large datasets containing sensitive information. Protecting this data is a critical task. An assurance program must address risks like data breaches and improper usage.

According to GOV.UK, an effective framework helps identify and reduce these risks, including privacy issues and bias. Failing to implement strong controls can lead to non-compliance with regulations like the Health Insurance Portability and Accountability Act (HIPAA), financial penalties, and a loss of trust.

How Regulatory Frameworks Shape AI Assurance

Regulatory frameworks provide the structure for AI assurance programs. They offer guidance for organizations to manage AI risks and demonstrate responsible practices. As AI adoption grows, several key standards and frameworks have emerged to guide governance efforts.

ISO Standards for AI Governance

The International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC) published the first AI management system standard, ISO/IEC 42001:2023. It specifies requirements for establishing, implementing, maintaining, and continually improving an AI management system. The standard gives organizations a structure to manage AI-related risks, which helps ensure AI systems are technically sound and ethically aligned.

Adopting this standard helps organizations show strong risk management in their AI programs. This can build customer trust and support compliance with other AI frameworks. The standard also highlights how to integrate AI governance into existing controls. This includes areas like data governance and enterprise risk management, creating a more complete approach to AI oversight.

The NIST AI Risk Management Framework

The National Institute of Standards and Technology (NIST) created the AI Risk Management Framework (AI RMF) to help organizations manage AI risks. The framework is voluntary and organizes risk management into four core functions: Govern, Map, Measure, and Manage. It emphasizes transparency, accountability, and ethical considerations in AI deployment.

By following the NIST guidelines, organizations can better assess AI risks, meet regulatory expectations, and build trust in their AI systems. The framework is a critical tool for any organization working through the complexities of AI assurance and governance.

Industry-Specific Requirements

Many industries are now establishing specific requirements for AI governance and assurance. These rules reflect the unique challenges and risks found in different sectors. For example, fields like healthcare, finance, and transportation are developing tailored frameworks to guide the use of AI.

These industry-specific requirements help organizations manage the details of AI deployment. They ensure AI systems comply with relevant standards and ethical guidelines for that particular field. As AI technology becomes more common, these focused frameworks will be essential for managing risks and maintaining trust in AI applications.

How to Overcome Implementation Barriers

Putting an artificial intelligence (AI) assurance program into practice involves navigating technical and organizational hurdles. However, a structured approach can help your organization manage these challenges effectively. By focusing on clear governance, team education, collaboration, and the right tools, you can build a durable framework for trust and compliance. These steps help turn abstract principles into concrete actions, making AI assurance an achievable goal for any organization.

Develop a Clear Governance Framework

A strong AI assurance program starts with a clear governance framework. This structure defines the rules and processes for how your organization develops, deploys, and manages AI systems. A key part of this framework is establishing accountability. According to the technology consulting firm Holistic AI, this means ensuring people and companies are responsible for what their AI systems do.

Your framework should outline who is responsible for AI-related decisions and risk management. It creates a clear line of sight for oversight, from development teams to executive leadership. This structure provides the foundation for consistent, ethical, and compliant AI practices across your entire organization.

Invest in Team Training and Education

Many organizations face a skills gap when implementing AI assurance. Your teams, from technical staff to compliance officers, need to understand the risks and requirements associated with AI. The UK government’s Centre for Data Ethics and Innovation recommends that organizations help their teams learn about AI assurance to prepare for future needs.

This education should cover the fundamentals of AI, common risks like bias and data privacy, and your company’s specific governance framework. Training can take many forms, including internal workshops or partnerships with external experts. An informed team is better equipped to identify potential issues and contribute to a culture of responsible AI use.

Form a Cross-Functional Team

AI assurance is not just an IT or data science problem; it is a business-wide responsibility. A single department rarely has the complete perspective needed to manage AI risk effectively. As noted by Domino Technologies, Inc., a successful strategy requires creating teams with people from different departments to manage AI risks.

Your AI assurance team should include members from legal, compliance, IT, data science, and business operations. This cross-functional collaboration ensures that you consider risks from all angles, from technical validation to regulatory compliance and ethical implications. This holistic approach leads to more robust and comprehensive oversight of your AI systems.

Use Automation and Advanced Tools

Manually monitoring complex AI systems is not practical or scalable. As AI becomes more integrated into business operations, you need automated tools to maintain continuous oversight. These tools can track model performance, monitor data quality, and detect potential issues like algorithmic bias in real time.

According to The Data Exchange, an online technology publication, companies need new tools to monitor model performance and the quality of the data they use. An AI governance platform can automate evidence collection and validation against controls from frameworks like ISO/IEC 27001 or the NIST AI Risk Management Framework. This continuous monitoring helps you maintain audit readiness and demonstrate compliance on an ongoing basis.

How to Measure the Effectiveness of AI Assurance

An AI assurance program is only as good as its results. To understand its value, you need clear ways to measure its impact on risk, trust, and compliance. Effective measurement uses a mix of quantitative metrics and qualitative feedback. Tracking the right indicators helps demonstrate the program's effectiveness to leaders, auditors, and regulators, while also highlighting areas for improvement.

Define Performance Metrics and KPIs

Define specific performance metrics to measure your program. These Key Performance Indicators (KPIs) help track progress and show value. AI assurance is a continuous process, not a one-time check. MITRE Corporation notes this requires ongoing model monitoring to prevent performance degradation. Your KPIs should reflect this continuous nature. Track model accuracy, fairness metrics, and incident resolution times. These metrics provide concrete evidence that your assurance activities are working as intended and meeting business objectives.
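Incident resolution time is one KPI that is easy to compute from an incident log. The sketch below assumes a hypothetical record format with `opened` and `closed` timestamps, where incidents still open have `closed` set to `None` and are excluded from the average.

```python
from datetime import datetime, timedelta

def mean_time_to_resolve(incidents):
    """Average time from opening to closing, over resolved incidents only."""
    durations = [i["closed"] - i["opened"] for i in incidents if i.get("closed")]
    if not durations:
        return None  # nothing resolved yet
    return sum(durations, timedelta()) / len(durations)

# Hypothetical incidents raised by model monitoring.
incidents = [
    {"id": "drift-alert-1", "opened": datetime(2024, 1, 1, 9), "closed": datetime(2024, 1, 1, 13)},
    {"id": "bias-alert-1", "opened": datetime(2024, 1, 2, 9), "closed": datetime(2024, 1, 2, 11)},
    {"id": "drift-alert-2", "opened": datetime(2024, 1, 3, 9), "closed": None},
]
mttr = mean_time_to_resolve(incidents)
```

Tracked month over month, a falling average alongside a stable or shrinking number of open incidents is one sign that monitoring and response processes are working.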

Establish Risk Assessment Protocols

An effective program is measured by its ability to manage risk. Establish clear protocols for assessing potential dangers in your AI systems. The UK government explains that AI assurance helps find and reduce risks like bias or privacy violations. A formal risk assessment protocol defines how your team identifies and evaluates these threats. This process should be repeatable and integrated into the AI lifecycle. Tracking the number of risks identified and mitigated over time directly measures your program's success in protecting the organization.
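A repeatable protocol often scores each risk by likelihood and impact and keeps the results in a risk register. The sketch below uses a 1 to 5 scale for both factors and a hypothetical banding of the product into low, medium, and high; the scales, thresholds, and example risks are assumptions that each organization would define for itself.

```python
from dataclasses import dataclass

@dataclass
class Risk:
    description: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (minor) to 5 (severe)
    mitigated: bool = False

    @property
    def score(self) -> int:
        return self.likelihood * self.impact

def rating(score):
    # Hypothetical banding; organizations set their own thresholds.
    if score >= 15:
        return "high"
    if score >= 8:
        return "medium"
    return "low"

def summarize(register):
    """Counts for reporting: risks identified, mitigated, and still-open high risks."""
    return {
        "identified": len(register),
        "mitigated": sum(r.mitigated for r in register),
        "open_high": sum(1 for r in register if not r.mitigated and rating(r.score) == "high"),
    }

register = [
    Risk("Bias in credit decisions", likelihood=4, impact=5),
    Risk("Training data contains personal information", likelihood=3, impact=4, mitigated=True),
    Risk("Drift in fraud model", likelihood=2, impact=3),
]
report = summarize(register)
```

The summary counts map directly to the measure described above: how many risks were identified and how many have been mitigated over time.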

Maintain Clear Documentation

Thorough documentation is another critical measure of an effective program. It provides a transparent record for auditors and regulators. According to Holistic AI, an AI assurance provider, all details about an AI system should be recorded and accessible, including data sources and validation results. Well-maintained documentation proves your governance is systematic and creates an essential audit trail. This clarity is fundamental to building and maintaining trust in your AI systems, making it easier to respond to inquiries from internal and external parties.

Create a Stakeholder Feedback Loop

Finally, measure effectiveness by gathering feedback from key stakeholders. Building trust is a central goal of AI assurance. The Center for Audit Quality suggests that companies can build trust by sharing information about their AI use. A formal feedback loop helps you do this. Regularly share assurance reports with leadership, compliance teams, and external auditors. Their feedback confirms if your program provides the necessary confidence and transparency. This qualitative input is essential for refining your strategy and ensuring it aligns with stakeholder expectations.

How to Build Your AI Assurance Program

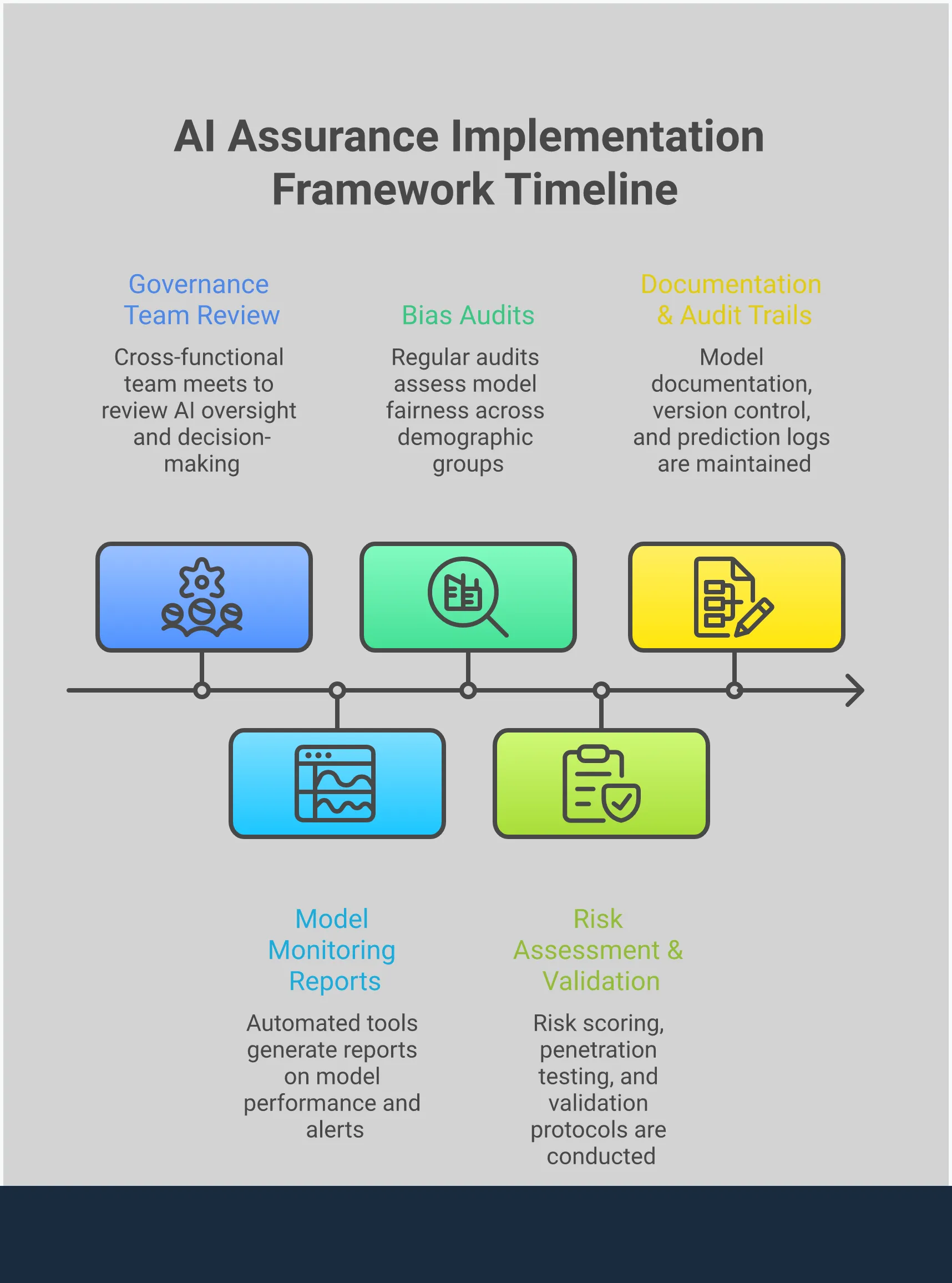

Building an effective artificial intelligence (AI) assurance program requires a structured approach. It involves understanding your AI systems, defining clear responsibilities, and committing to ongoing oversight. By following a clear process, your organization can create a program that builds trust and manages risk effectively.

The process can be broken down into four key stages: assessing your needs, implementing a strategy, defining governance, and continuous monitoring. Each step builds on the last to create a comprehensive framework for AI assurance.

Assess and Plan

The first step is to understand the scope of your AI usage and its potential impact. This involves creating an inventory of all AI systems across the organization and identifying the risks associated with each one. These risks could relate to data privacy, model bias, security vulnerabilities, or operational failures.
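An AI inventory can start as a simple structured list. The sketch below is a hypothetical format, not a prescribed schema: each system records an owner, its main risk categories, and when it was last assessed, and a helper flags systems with no owner or with an assessment older than an assumed 180-day limit.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional

@dataclass
class AISystem:
    name: str
    owner: Optional[str]
    risks: List[str] = field(default_factory=list)  # e.g. "privacy", "bias", "security"
    last_assessed: Optional[date] = None

def needs_attention(inventory, today, max_age_days=180):
    """Names of systems with no owner, or never assessed, or assessed too long ago."""
    flagged = []
    for s in inventory:
        stale = s.last_assessed is None or (today - s.last_assessed).days > max_age_days
        if s.owner is None or stale:
            flagged.append(s.name)
    return flagged

inventory = [
    AISystem("resume-screener", "HR analytics", ["bias", "privacy"], date(2024, 5, 1)),
    AISystem("chat-assistant", None, ["security"], date(2024, 6, 1)),
    AISystem("demand-forecast", "Supply chain", ["operational"]),
]
flagged = needs_attention(inventory, today=date(2024, 7, 1))
```

Even this minimal structure makes gaps visible early: systems nobody owns, and systems whose risks have never been assessed.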

According to the UK government, the goal of AI assurance is to measure, check, and share information to show that AI systems can be trusted. This process helps confirm that an AI system works as intended, that its limitations are understood, and that potential risks are managed. Your plan should define what "trustworthy" means for each system and outline the specific controls needed to achieve it.

Implement Your Strategy

Once you have a plan, the next step is to put it into action. This involves selecting the right tools, techniques, and processes to test and validate your AI systems. Your strategy should cover the entire lifecycle of each model, from development and deployment to ongoing operation.

AI assurance is a continuous process, not a one-time check. As the research organization MITRE explains, key practices include model monitoring, regular audits, and formal AI assurance plans. Implementing your strategy means integrating these practices into your existing workflows. This ensures that assurance activities are performed consistently and that results are documented for review by auditors and stakeholders.

Define the Governance Structure

A successful AI assurance program needs clear lines of authority and accountability. This requires a formal governance structure that defines who is responsible for overseeing AI systems and managing their risks. Without clear ownership, assurance efforts can become fragmented and ineffective.

Your governance framework should establish clear rules and structures that define who is responsible for what. This includes creating an oversight committee, assigning roles to specific teams, and establishing reporting procedures. A strong governance framework ensures that everyone understands their role in maintaining the integrity and trustworthiness of your organization’s AI systems. This structure provides the foundation for consistent decision-making and accountability.
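Many teams record "who is responsible for what" in a RACI matrix (Responsible, Accountable, Consulted, Informed). The sketch below assumes that convention, with one letter per role, and checks that every activity has exactly one Accountable role and at least one Responsible role. The activities and role names are hypothetical examples.

```python
def raci_gaps(matrix):
    """Activities lacking exactly one Accountable (A) or at least one Responsible (R) role."""
    problems = {}
    for activity, assignments in matrix.items():
        letters = list(assignments.values())
        issues = []
        accountable = letters.count("A")
        if accountable != 1:
            issues.append(f"{accountable} accountable roles (expected 1)")
        if "R" not in letters:
            issues.append("no responsible role")
        if issues:
            problems[activity] = issues
    return problems

matrix = {
    "approve model release": {"AI oversight committee": "A", "Data science": "R", "Compliance": "C"},
    "monitor drift": {"Data science": "R", "IT operations": "R"},
    "respond to incidents": {"Compliance": "A", "Legal": "A", "IT operations": "R"},
}
gaps = raci_gaps(matrix)
```

Running a check like this whenever roles change helps prevent the fragmented ownership described above, such as activities nobody is accountable for or activities with competing owners.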

Monitor and Improve Continuously

AI systems are not static. Their performance can change over time as they encounter new data or as business conditions evolve. Because of this, continuous monitoring is essential for maintaining trust and managing risk long after a model is deployed.

AI systems should be watched constantly to ensure they continue to perform as expected. This includes performance monitoring and error management to find, understand, and fix mistakes. An effective program includes processes for tracking key metrics, investigating anomalies, and updating models when necessary. This commitment to continuous improvement helps your organization adapt to new challenges and maintain a high standard of AI assurance.

Mike Reeves, PhD

Mike is a key figure at the intersection of psychology and technology. He has created and managed algorithms and decision-making tools used by more than half of the Fortune 100.